RAG for Commerce Catalogs: Grounding LLM Answers in Normalized Product Fields Instead of Merchant Copy

RAG (retrieval-augmented generation) is a pattern in which a language model generates an answer while referencing retrieved context, rather than relying only on what it memorized during training. The original RAG paper frames this as combining a generator with an external, non-parametric memory so that outputs can be grounded in retrieved evidence and kept up to date.

In affiliate commerce, RAG fails when the retrieval layer is built from merchant copy alone. Merchants rewrite titles, omit variant details, and use promotional language that confuses identity. Affiliate.com gives you a better retrieval substrate: normalized fields across merchants and networks, so the model can ground answers in barcodes, MPNs, pricing, availability, merchant and network metadata, and canonical links.

Why merchant copy is the wrong ground truth for shopping answers

LLMs are persuasive, but product truth is often non-linguistic: it lives in identifiers, prices, and stock flags rather than in descriptive text.

Merchant copy tends to break in three predictable ways:

- Identity drift, where similar titles refer to bundles, older generations, or private-label lookalikes

- Deal inflation, where “was” pricing is ambiguous unless you anchor on regular price and final price semantics

- Eligibility mismatch, where the listing exists but is out of stock or not commissionable, so an answer is technically correct but operationally useless

A commerce RAG stack should retrieve fields, not prose.

The retrieval unit should be a product record, not a paragraph

A practical commerce RAG system retrieves structured product records that include:

- IDs: barcode, ASIN, SKU, MPN

- Pricing: currency, regular price, final price, discount, sale price, ship price

- Inventory: in stock, stock quantity, availability, commissionable status

- Governance: merchant ID and name, network ID and name

- URLs: commission URL, direct URL, image URL

This is the difference between “the model sounds right” and “the model can be verified.”
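To make the field groups concrete, here is a minimal Python sketch of a product record as a retrieval unit. The class name `ProductRecord`, its field names, and the `citation` helper are hypothetical, cover only a subset of the fields listed above, and are not Affiliate.com's actual response schema.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical record shape: field names mirror the groups above
# (IDs, pricing, inventory, governance, URLs), not a real API schema.
@dataclass(frozen=True)
class ProductRecord:
    # IDs
    barcode: Optional[str]
    asin: Optional[str]
    sku: Optional[str]
    mpn: Optional[str]
    # Pricing
    currency: str
    regular_price: float
    final_price: float
    # Inventory
    in_stock: bool
    commissionable: bool
    # Governance
    merchant_name: str
    network_name: str
    # URLs
    commission_url: str

    def citation(self) -> str:
        """Render the fields an answer is allowed to cite, so each claim can be checked."""
        return (
            f"barcode={self.barcode} merchant={self.merchant_name} "
            f"network={self.network_name} "
            f"final_price={self.final_price:.2f} {self.currency} "
            f"in_stock={self.in_stock}"
        )


# Example record with placeholder values.
example = ProductRecord(
    barcode="012345678905", asin=None, sku="SKU-1", mpn="MPN-1",
    currency="USD", regular_price=129.0, final_price=99.0,
    in_stock=True, commissionable=True,
    merchant_name="Example Merchant", network_name="Example Network",
    commission_url="https://example.com/c/1",
)
print(example.citation())
```

Passing the model a citation string like this, rather than the merchant's title and description, keeps every stated fact traceable to a named field.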

Identity first: barcode, then MPN, then text

Affiliate.com’s own guidance is blunt: barcode is the unique identifier that can verify that two listings from two different networks refer to the same product. It is the cleanest way to ground a recommendation that spans merchants, especially when titles do not match.

MPN or SKU has a different job: it is precise for a specific product from a specific merchant, which makes it ideal for debugging or merchant-scoped experiences.

Text is still useful, but only for discovery. The any field is designed to cast a wider net by searching across names, descriptions, brands, and categories; you then layer filters to narrow the results.
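The barcode, then MPN, then text ordering can be sketched as a small identity function. This assumes records are plain dicts with hypothetical keys (`barcode`, `mpn`, `merchant`, `name`); following the guidance above, the MPN is treated as merchant-scoped, and text is tagged so it is never accepted as proof of identity.

```python
def identity_key(record: dict) -> tuple[str, str]:
    """Pick the strongest identifier available: barcode, then MPN, then text.

    Keys ("barcode", "mpn", "merchant", "name") are hypothetical field names.
    """
    barcode = (record.get("barcode") or "").strip()
    if barcode:
        # Barcode can confirm identity across merchants and networks.
        return ("barcode", barcode)
    mpn = (record.get("mpn") or "").strip()
    if mpn:
        # MPN/SKU is treated as precise only within one merchant.
        merchant = (record.get("merchant") or "").strip()
        return ("mpn", f"{merchant}:{mpn}")
    # Text is a discovery signal only; normalize whitespace and case.
    text = " ".join(str(record.get("name", "")).lower().split())
    return ("text", text)


def same_product(a: dict, b: dict) -> bool:
    """Claim 'same product' only on an identifier match, never on title similarity."""
    kind_a, key_a = identity_key(a)
    kind_b, key_b = identity_key(b)
    return kind_a == kind_b and kind_a != "text" and key_a == key_b
```

The check is deliberately conservative: two records that only share a title are never merged, even when the titles are identical.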

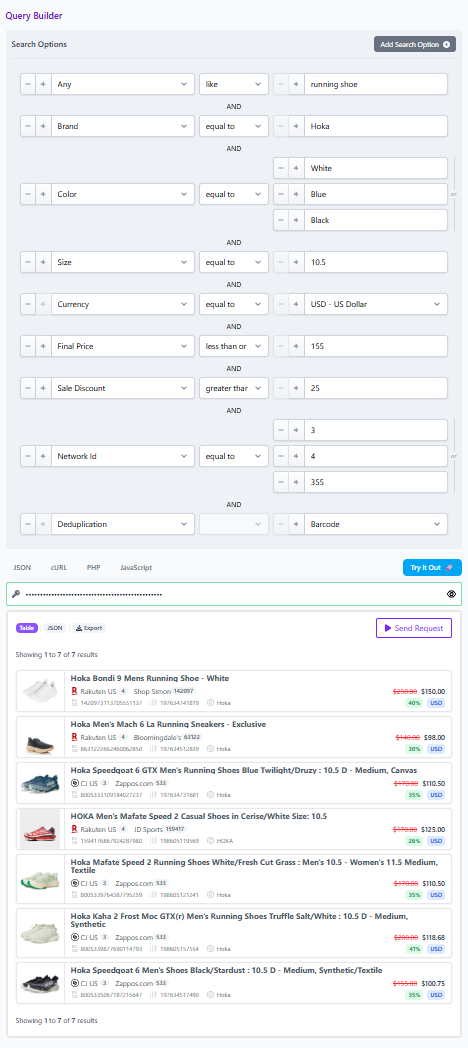

A safe commerce RAG loop you can actually ship

Step 1, let the LLM write the brief, not the answer

Use the model to extract constraints from a prompt:

- general search intent, to run as an Any Like query

- required attributes (brand, color, size, material)

- business rules (currency, price ceiling, minimum discount)

- governance rules (allowed merchants, allowed networks)

This produces a structured plan without trusting the model on identity.
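One way to keep the brief structured is to ask the model for JSON and validate it against a fixed shape before any retrieval runs. The sketch below assumes a hypothetical `RetrievalBrief` schema; its key names are illustrative, not an Affiliate.com parameter list. Identity fields are intentionally absent, so a brief that tries to supply a barcode or MPN is rejected.

```python
import json
from dataclasses import dataclass, field, fields
from typing import Optional


@dataclass
class RetrievalBrief:
    # Hypothetical brief schema extracted by the LLM from the user's prompt.
    query: str                                   # general search intent
    brand: Optional[str] = None                  # required attributes
    color: Optional[str] = None
    size: Optional[str] = None
    material: Optional[str] = None
    currency: Optional[str] = None               # business rules
    max_price: Optional[float] = None
    min_discount_pct: Optional[float] = None
    allowed_merchants: list[str] = field(default_factory=list)  # governance rules
    allowed_networks: list[str] = field(default_factory=list)


ALLOWED_KEYS = {f.name for f in fields(RetrievalBrief)}


def parse_brief(llm_json: str) -> RetrievalBrief:
    """Parse model output into a brief, rejecting keys outside the schema."""
    data = json.loads(llm_json)
    unknown = set(data) - ALLOWED_KEYS
    if unknown:
        # e.g. a model that invents a "barcode" is stopped here.
        raise ValueError(f"unexpected keys from model: {sorted(unknown)}")
    brief = RetrievalBrief(**data)
    if brief.max_price is not None and brief.max_price <= 0:
        raise ValueError("max_price must be positive")
    return brief
```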

Step 2, retrieve candidates with the any field, then filter

Start broad with the any field for recall, then layer precision filters such as brand, merchant, and price to narrow results.

This is where Affiliate.com is strongest: searchable experiences built from normalized product data, rather than manual spreadsheet parsing.
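In production the search itself runs on Affiliate.com; the sketch below only illustrates the recall-then-precision shape on an in-memory list. The text fields searched, the record keys, and the naive substring matching are all assumptions made for illustration.

```python
from typing import Iterable, Optional

# Hypothetical text fields that an "any"-style search spans.
ANY_FIELDS = ("name", "description", "brand", "category")


def any_field_match(record: dict, terms: str) -> bool:
    """Recall step: each query term must appear as a substring of some text field."""
    haystack = " ".join(str(record.get(f, "")) for f in ANY_FIELDS).lower()
    return all(term in haystack for term in terms.lower().split())


def retrieve(records: Iterable[dict], terms: str,
             brand: Optional[str] = None,
             merchants: Optional[set[str]] = None,
             max_price: Optional[float] = None) -> list[dict]:
    # Start broad for recall.
    candidates = [r for r in records if any_field_match(r, terms)]
    # Then layer precision filters on top of the broad match.
    if brand is not None:
        candidates = [r for r in candidates
                      if str(r.get("brand", "")).lower() == brand.lower()]
    if merchants is not None:
        candidates = [r for r in candidates if r.get("merchant") in merchants]
    if max_price is not None:
        candidates = [r for r in candidates
                      if r.get("final_price", float("inf")) <= max_price]
    return candidates
```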

Step 3, pin identity for finalists using barcode or MPN

Once you have finalists, switch retrieval to identifiers:

- For cross-merchant comparisons, retrieve by barcode so all offers refer to the same physical product.

- For merchant-specific validation, retrieve by SKU or MPN.

This step is what prevents answers about the wrong product model.
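Pinning identity can be sketched as two lookups over already retrieved records: a barcode re-query that groups every offer for each finalist, and a merchant-scoped SKU check that must match exactly one listing. Both function names and the record keys are hypothetical.

```python
from collections import defaultdict


def pin_by_barcode(finalists: list[dict], catalog: list[dict]) -> dict[str, list[dict]]:
    """Group every offer that shares a finalist's barcode (same physical product).

    Finalists without a barcode are skipped; they need merchant-scoped validation.
    """
    wanted = {f["barcode"] for f in finalists if f.get("barcode")}
    groups: dict[str, list[dict]] = defaultdict(list)
    for offer in catalog:
        if offer.get("barcode") in wanted:
            groups[offer["barcode"]].append(offer)
    return dict(groups)


def validate_merchant_offer(catalog: list[dict], merchant: str, sku: str):
    """Merchant-scoped check: return the listing only if exactly one matches."""
    matches = [o for o in catalog
               if o.get("merchant") == merchant and o.get("sku") == sku]
    return matches[0] if len(matches) == 1 else None
```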

Step 4, choose deduplication based on the question

Deduplication is a product decision that changes the shape of what the model sees.

If enabled, Affiliate.com clusters matching product offers and selects one representative record for display. If disabled, the response includes all matching variants and offers, which is what you want when the user asks “where is it cheapest” or “which merchant has it in stock.”

A simple rule:

- Recommendation list questions: deduplication on

- Offer comparison questions: deduplication off
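The rule above can be encoded as a small switch. Affiliate.com performs the actual clustering server-side; `local_dedupe` is only a local stand-in that keeps one representative per barcode, and picking the lowest final price as that representative is an assumption, not Affiliate.com's selection logic.

```python
from enum import Enum


class QuestionType(Enum):
    RECOMMENDATION_LIST = "recommendation_list"  # "what are good options for X?"
    OFFER_COMPARISON = "offer_comparison"        # "where is it cheapest / in stock?"


def deduplicate_for(question: QuestionType) -> bool:
    """Deduplicate recommendation lists; keep every offer for comparisons."""
    return question is QuestionType.RECOMMENDATION_LIST


def local_dedupe(offers: list[dict]) -> list[dict]:
    """Stand-in clustering: one representative per barcode (lowest final price)."""
    best: dict[str, dict] = {}
    for o in offers:
        # Records without a barcode are never merged with anything.
        key = o.get("barcode") or f"no-barcode:{id(o)}"
        if key not in best or o["final_price"] < best[key]["final_price"]:
            best[key] = o
    return list(best.values())


def shape_results(offers: list[dict], question: QuestionType) -> list[dict]:
    return local_dedupe(offers) if deduplicate_for(question) else offers
```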

Step 5, generate the answer with field grounded citations

Now the model can explain its reasoning in plain language, but the facts come from fields:

- “Best value” should be based on final price and discount, compared to regular price

- “Available now” should require in stock and, if you use it, commissionable status

- “Same product” should be justified by barcode match, not title similarity
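These three rules can be applied before generation by turning offers into fact lines that each cite the fields they rest on. The helper names and record keys below are hypothetical; discount is computed from regular and final price rather than taken from merchant "was" copy, and requiring commissionable status is an optional switch.

```python
def discount_pct(offer: dict) -> float:
    """Discount from regular vs final price, not from promotional copy."""
    regular, final = offer["regular_price"], offer["final_price"]
    if regular <= 0 or final > regular:
        return 0.0
    return round(100.0 * (regular - final) / regular, 1)


def available_now(offer: dict, require_commissionable: bool = True) -> bool:
    """In stock and, optionally, commissionable."""
    return bool(offer.get("in_stock")) and (
        not require_commissionable or bool(offer.get("commissionable")))


def grounded_facts(offers: list[dict]) -> list[str]:
    """Fact lines for the generation prompt, each naming the fields it cites."""
    facts = []
    for o in offers:
        facts.append(
            f"{o['merchant']}: final_price={o['final_price']:.2f} {o['currency']}, "
            f"discount={discount_pct(o)}% vs regular_price={o['regular_price']:.2f}, "
            f"available_now={available_now(o)}")
    # "Same product" is asserted only when every offer carries the same barcode.
    barcodes = {o.get("barcode") for o in offers}
    if offers and all(o.get("barcode") for o in offers) and len(barcodes) == 1:
        facts.append(f"All offers share barcode {offers[0]['barcode']}.")
    return facts
```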

Applied example: answering “What is the best deal on this exact item?”

Imagine a user asks: “Is this Amazon item cheaper elsewhere?”

A robust RAG path looks like this:

- Retrieve Amazon context using the ASIN, then map it to a barcode when one is present, since barcodes are universal while the ASIN is Amazon-specific.

- Run a barcode query to retrieve the exact same product across multiple merchants.

- Turn deduplication off so you see all offers for that barcode.

- Filter to one currency before comparing prices, and filter to in stock so the answer is actionable.

- Sort by final price, and optionally use discount to highlight true price drops.

The model can then answer in a way that is both human and defensible: it can name the merchant with the lowest final price, note which offers are in stock, and explain that all offers share the same barcode, so the comparison is identity-safe.
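The path above can be sketched end to end over an in-memory list of records. The function names, record keys, and placeholder ASIN are hypothetical; a real system would issue the ASIN and barcode lookups as Affiliate.com queries with deduplication off.

```python
from typing import Optional


def barcode_for_asin(catalog: list[dict], asin: str) -> Optional[str]:
    """Resolve the Amazon-specific ASIN to a universal barcode, if one is present."""
    for rec in catalog:
        if rec.get("asin") == asin and rec.get("barcode"):
            return rec["barcode"]
    return None


def cheapest_offers(catalog: list[dict], asin: str, currency: str) -> list[dict]:
    barcode = barcode_for_asin(catalog, asin)
    if barcode is None:
        # Without a barcode there is no identity-safe cross-merchant comparison.
        return []
    # Every offer for that barcode, i.e. deduplication off.
    offers = [r for r in catalog if r.get("barcode") == barcode]
    # One currency only, and in stock so the answer is actionable.
    offers = [r for r in offers if r["currency"] == currency and r.get("in_stock")]
    # Sort by final price; ties go to the larger absolute discount.
    return sorted(offers, key=lambda r: (r["final_price"],
                                         -(r["regular_price"] - r["final_price"])))
```

Returning an empty list when no barcode is found is a deliberate choice: the model should then say it cannot confirm the same item elsewhere instead of guessing from titles.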

Make it supportable: use Query Builder share links as the audit trail

RAG systems create a new operational need: reproducibility. When a result looks wrong, support needs to replay the exact retrieval.

Affiliate.com’s Query Builder supports sharing a link that opens the query and populates the specified products, which makes it a natural “receipt” for a RAG output.

Treat the share link as the canonical artifact:

- Keep it alongside your prompt template

- Use it for editorial review

- Use it for debugging missing offers or unexpected merchants
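A lightweight way to keep the share link next to each answer is a small receipt record written to your logs. The `RetrievalReceipt` shape and its field names are hypothetical, and the link is stored verbatim as an opaque string; the example URL is a placeholder, not a real Query Builder link format.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class RetrievalReceipt:
    answer_id: str
    prompt_template: str          # e.g. a version tag for the prompt template
    query_share_link: str         # Query Builder share link, stored verbatim
    barcodes_cited: tuple[str, ...]
    created_at: str               # UTC timestamp in ISO 8601


def make_receipt(answer_id: str, prompt_template: str,
                 share_link: str, barcodes: list[str]) -> RetrievalReceipt:
    return RetrievalReceipt(
        answer_id=answer_id,
        prompt_template=prompt_template,
        query_share_link=share_link,
        barcodes_cited=tuple(sorted(set(barcodes))),  # stable, de-duplicated
        created_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(receipt: RetrievalReceipt) -> str:
    """One JSON line per answer, for editorial review and support replay."""
    return json.dumps(asdict(receipt), sort_keys=True)
```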

Turn one high-intent question into a deterministic retrieval pattern

Start by building your first commerce RAG retrieval set in Affiliate.com’s Query Builder: use the any field for discovery, layer brand and price filters, then pin finalists by barcode and test deduplication on and off. When the result set is correct, share the query link as your ground-truth receipt, then wire the same retrieval pattern into your LLM application so answers stay tied to normalized fields, not fragile merchant copy.